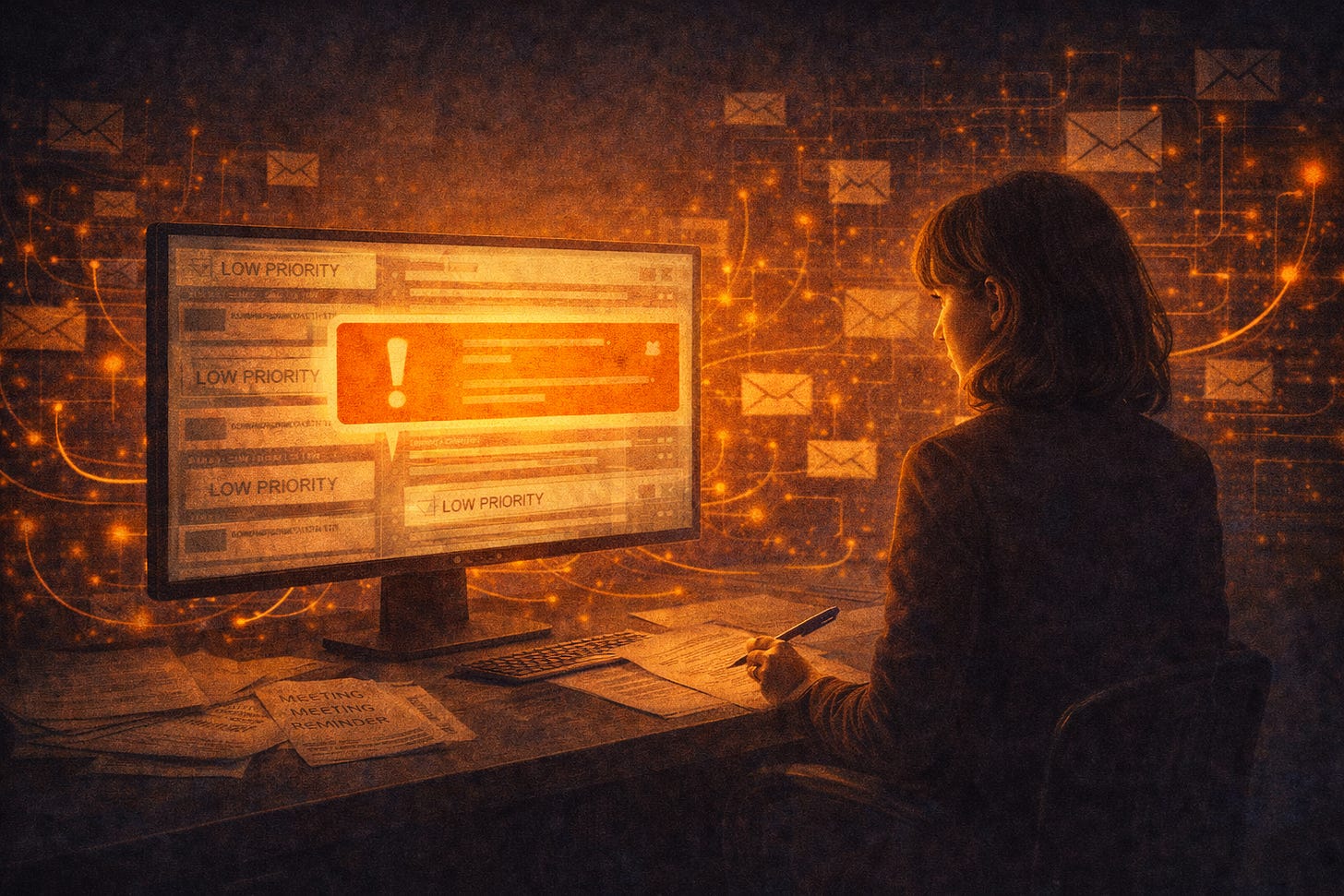

You Have 59 Low-Priority Emails. One of Them Isn't.

Your AI filtered a compliance notice. Your vendor's AI generated it. Neither flagged the gap. In regulated industries, that's not a workflow problem. It's a liability.

It’s Wednesday morning. You haven’t opened your laptop yet. Your AI has already read 63 emails, decided four of them matter, and filed the rest. You start with the four. By 9:30, you’ve cleared them.

Somewhere in the 59 is a security disclosure from a third-party vendor your health system uses for billing. The vendor’s communications team uses AI to generate and distribute notices at scale. The email was formatted like their standard marketing correspondence and sent from the same bulk delivery domain they use for product updates and newsletters. Your AI, Microsoft Copilot in this case, read those signals correctly. It just didn’t know this message was different.

You find it on Thursday afternoon. By then, the vendor has already followed up by phone, concerned that no one has responded. The incident they disclosed happened on Tuesday. Your HIPAA-required risk assessment clock started the moment the email arrived.

This is the part of the AI communications story that doesn’t get discussed in the enterprise press.

We’ve been treating AI-generated spam as an inbox nuisance. Something to solve with better filters. But the filters are AI now, too. And the two systems are making decisions about each other without you in the room.

Here’s what the shift actually looks like. Google organic search traffic in the U.S. dropped 38% between November 2024 and November 2025, according to Chartbeat data cited in a January 2026 Reuters Institute report. Major publishers have lost 40 to 55% of their traffic. Some smaller ones have shut down entirely. Google now surfaces AI-generated summaries at the top of results, so users stop clicking through, and the economic model that paid humans to write original content quietly collapses.

The economics of human-written content are breaking because the economics of AI-generated content work better, and neither ad networks nor ranking algorithms distinguish between the two. You can build an agent that sends email, browses the web, makes phone calls, and negotiates contracts from any laptop, using open-source frameworks, at little or no cost. The barrier to entry for mass AI-generated communications is effectively zero.

Recently, someone who runs spam infrastructure for one of the largest platforms made a prediction: within 90 days, email, phone calls, and messaging would be so flooded with AI-generated content that they’d no longer be reliably usable. Then he bought 1.7 million bot accounts on X to prove the point. Bots used to be simple; now they have natural-language fluency and access to payment infrastructure. What’s changed isn’t sophistication alone. It’s scale, personalization, and near-zero cost, all arriving at once.

This isn’t just a volume problem. Reinforcement learning from human feedback (RLHF) is how most major language models get trained. You show humans two responses and ask which one they prefer. Repeat that millions of times, train a reward model on those choices, then tune the language model to score well against it, and you get a model mathematically optimized to produce content people find compelling. Not necessarily accurate. Not necessarily helpful. Compelling. The mechanism is the same whether you’re building a helpful assistant or a content generator running spam operations. Much of the AI-generated content hitting your inbox was built with that same optimization. Your spam filter was not.
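To make the preference step concrete, here is a toy Python sketch of the first stage of RLHF: fitting a reward model to pairwise choices with a Bradley-Terry (logistic) loss, a common choice for this step. Everything in it is an illustrative assumption, not how any particular production model is trained: each response is reduced to three hypothetical features (persuasiveness, personalization, accuracy), and the simulated raters are assumed to care mostly about the first two. The only point is the mechanism: the reward model learns whatever the raters prefer.

```python
import math
import random

# Toy sketch of RLHF's first stage: learn a reward model from pairwise preferences.
# Each response is a hypothetical feature vector: [persuasiveness, personalization, accuracy].

def sigmoid(z: float) -> float:
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def reward(weights, features):
    """Linear reward r(x) = w . x."""
    return sum(w * f for w, f in zip(weights, features))

def train_reward_model(pairs, dim, lr=0.1, epochs=200):
    """Bradley-Terry fit: P(preferred beats rejected) = sigmoid(r(preferred) - r(rejected))."""
    weights = [0.0] * dim
    for _ in range(epochs):
        for preferred, rejected in pairs:
            margin = reward(weights, preferred) - reward(weights, rejected)
            # Gradient step on -log(sigmoid(margin)); the step shrinks as the margin grows.
            step = lr * sigmoid(-margin)
            for i in range(dim):
                weights[i] += step * (preferred[i] - rejected[i])
    return weights

# Assumed rater taste: mostly persuasiveness and personalization, barely accuracy.
RATER_TASTE = [1.0, 0.8, 0.1]

def simulated_choice(a, b):
    """Return (preferred, rejected) as the hypothetical rater would choose."""
    return (a, b) if reward(RATER_TASTE, a) >= reward(RATER_TASTE, b) else (b, a)

random.seed(0)
pairs = [
    simulated_choice([random.random() for _ in range(3)], [random.random() for _ in range(3)])
    for _ in range(500)
]

weights = train_reward_model(pairs, dim=3)
agreement = sum(reward(weights, p) > reward(weights, r) for p, r in pairs) / len(pairs)
print("learned weights:", [round(w, 2) for w in weights])
print(f"agrees with raters on {agreement:.0%} of pairs")
```

With these assumed raters, the learned weights put most of the reward on persuasiveness and personalization and very little on accuracy. A real pipeline would then tune the language model against a reward like this one, which is where the optimization pressure comes from.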

Think of it this way. Your spam filter is trying to catch traffic specifically engineered, at the model level, to look like legitimate communication. Spam earned a PhD in looking legitimate while your filter kept studying for the old exam.

Meanwhile, the filter is getting smarter too. Microsoft’s Copilot “Prioritize My Inbox” feature reached general availability in April 2025. More than 25% of business inboxes now use some form of AI to categorize, prioritize, or triage incoming email. Superhuman, Google Gemini, and a growing range of standalone tools do the same. The pitch is straightforward: let AI decide what you need to read. Reduce cognitive load.

I understand why it exists. The problem isn’t the feature. The problem is what happens at the intersection of a filter trained on your behavioral history and a content environment trained to exploit behavioral patterns.

AI inbox prioritization learns what you respond to. It models your patterns. But organizational risk does not follow your patterns. Breach notifications don’t follow your patterns. Regulatory notices don’t follow your patterns. A compliance disclosure sent at scale, from a bulk delivery domain, formatted like every other vendor communication you’ve deprioritized this year, will register to your AI filter exactly as it should: low priority. The AI made a correct call with incorrect consequences.
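A deliberately simplified Python sketch shows how that can happen. The scoring function, weights, threshold, and domains below are all hypothetical; products such as Copilot do not publish their ranking logic, and this is not a claim about how any of them work. The score uses only behavioral and formatting signals: how often you reply to the sender’s domain, whether the message arrives from a bulk delivery domain, and how closely it resembles mail you’ve ignored before.

```python
from dataclasses import dataclass

# Hypothetical triage model: scores mail only on behavioral and formatting signals.
HIGH_PRIORITY_THRESHOLD = 0.5  # assumed cutoff for "surface this"

@dataclass
class Email:
    sender_domain: str
    from_bulk_domain: bool       # sent via a bulk delivery domain
    template_similarity: float   # 0..1, resemblance to mail you have ignored before
    subject: str                 # note: never read by the score below

def priority_score(email: Email, reply_rate_by_domain: dict[str, float]) -> float:
    """Return a score in [0, 1]; higher means 'show this to the user'."""
    engagement = reply_rate_by_domain.get(email.sender_domain, 0.0)
    score = 0.6 * engagement + 0.4 * (1.0 - email.template_similarity)
    if email.from_bulk_domain:
        score *= 0.5  # bulk senders are assumed to be mostly newsletters
    return score

# Your history: you reply to colleagues, almost never to the vendor's bulk domain.
history = {"colleague.example": 0.9, "vendor-mail.example": 0.02}

notice = Email("vendor-mail.example", True, 0.9, "Security incident notification")
memo = Email("colleague.example", False, 0.2, "Budget review moved to 3pm")

for email in (notice, memo):
    score = priority_score(email, history)
    label = "surface" if score >= HIGH_PRIORITY_THRESHOLD else "file away"
    print(f"{email.subject}: {score:.3f} -> {label}")
```

Nothing in the score reads what the message says, so the vendor’s disclosure lands below the threshold for the same reasons a newsletter would.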

Microsoft disclosed in early 2026 that a logic error had briefly caused Copilot Chat to process and summarize emails labeled Confidential, regardless of sensitivity settings. The fix was deployed quickly, and the disclosure was candid. But the incident points to something worth noting: the AI triage layer is already making decisions about your sensitive communications. The governance controls are still catching up.

There’s no audit log that tells you which emails were deprioritized and why. There’s no notification when a time-sensitive message is sorted away. What you have is a black box that handles 59 of your 63 daily decisions and surfaces the four it thinks matter. When the black box is wrong, you find out days later, from a phone call.
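For contrast, here is a minimal Python sketch of the kind of record that is missing: one entry per triage decision, with the score, the threshold, and the reasons, so someone can later ask which messages from a given sender were filed away. The function names, fields, message IDs, and domains are hypothetical; no current inbox product is implied to offer this.

```python
import json
from datetime import datetime, timezone

def log_triage_decision(log, message_id, sender_domain, score, threshold, reasons):
    """Append a hypothetical audit record explaining one triage decision."""
    record = {
        "message_id": message_id,
        "sender_domain": sender_domain,
        "score": round(score, 3),
        "threshold": threshold,
        "surfaced": score >= threshold,
        "reasons": reasons,
        "decided_at": datetime.now(timezone.utc).isoformat(),
    }
    log.append(record)
    return record

def deprioritized_from(log, sender_domain):
    """List every message from a sender that the filter chose not to surface."""
    return [r for r in log if r["sender_domain"] == sender_domain and not r["surfaced"]]

audit_log = []
log_triage_decision(audit_log, "msg-001", "colleague.example", 0.86, 0.5,
                    ["high reply rate"])
log_triage_decision(audit_log, "msg-002", "vendor-mail.example", 0.026, 0.5,
                    ["bulk delivery domain", "matches ignored template", "low reply rate"])

missed = deprioritized_from(audit_log, "vendor-mail.example")
print(json.dumps(missed, indent=2))
```

A log like this doesn’t make the filter any smarter. It makes its decisions reviewable.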

The structure is identical to something I wrote about earlier in this series: Pactum’s AI negotiating purchasing contracts for Walmart and Maersk while the suppliers’ systems responded on the other end. The humans set the parameters and stepped away. The accountability gap didn’t stay contained. It compounded. Each system operated correctly within its own design. Neither was built to flag when the combination produced consequences that neither principal anticipated.

The inbox version is quieter. Less visible. An AI generated the message. An AI filtered it. A human never saw it. The interaction logged correctly in both systems. The consequences surfaced in a phone call from a vendor asking why no one responded to the disclosure they sent on Tuesday.

If you’re a VP of IT at a health system, this isn’t abstract. Your organization runs on vendor relationships, compliance timelines, and regulatory correspondence. Your team deployed AI inbox tools because they work: the cognitive load reduction is real. Your vendors deployed AI communications tools because their teams are small, and the volume demands are massive. The two systems talk to each other dozens of times a day. Nobody mapped that conversation before it went live.

Regulators and courts will eventually have to decide whether a notice that an AI filter sorted away still counts as constructively received, and whether ‘AI filtered it’ is any defense. Existing doctrines around duty to monitor and constructive notice were not written with AI triage in mind. Those questions are coming. Most organizations aren’t ready for them.

What I Don’t Have Answers To

We don’t yet have a design pattern for an AI filter that is appropriately skeptical without becoming useless. Copilot’s logic for deprioritizing bulk-formatted email is right for 98% of bulk-formatted email. The open question is how to catch the 2% that matters without recreating the inbox overload you were trying to escape. Nobody has solved that cleanly.

The liability question is equally unresolved. If your vendor’s communications AI sends a compliance disclosure using a template that triggers your organization’s AI filter, and you miss the notification window, who is responsible? The vendor? Your organization? The filter vendor? Existing frameworks don’t map cleanly onto a chain where neither sender nor receiver is human. That’s a design gap, not just a legal one.

And the filter doesn’t weigh any of this. The messages most likely to get deprioritized without review come from smaller senders: small vendors, nonprofits, community organizations, and individuals. A filter that scores legitimacy on signals like sending domain, template, and engagement history structurally disadvantages senders who are low-signal but high-importance. It’s not malicious. It’s what happens when nobody asks the system to care about the outliers.

This is being framed as a consumer problem: Is your personal inbox manageable? But that framing misses what’s actually breaking. For organizations still operating on the assumption that a message sent is a message received, the gap has already opened. In healthcare and financial services, it’s a liability problem now, not a future one.

The only communications still carrying a reliable signal are from people you already trust. When the verification layer fails, you fall back to the social layer. The problem for enterprises is that the social layer doesn’t scale, isn’t auditable, and shuts out anyone who hasn’t already earned a relationship with you. Smaller vendors, nonprofits, and individual constituents. They get filtered. They don’t get a callback.

That’s not an inbox problem. It’s an architecture problem.

If you’re thinking about these questions too, I hope you’ll subscribe.

Rachel Ankerholz is an IT Director and writer exploring the intersection of AI ethics, accessibility, and human-centered technology. She writes about who gets included, and who gets left behind, when we build systems.